Overview

The Biomimetic Core that runs on-board MIRO is an implementation of some elements of Consequential Robotics' 3B-CS (Biomimetic Brain-Based Control System). 3B-CS is a collection of computational models based on components of the mammalian brain.

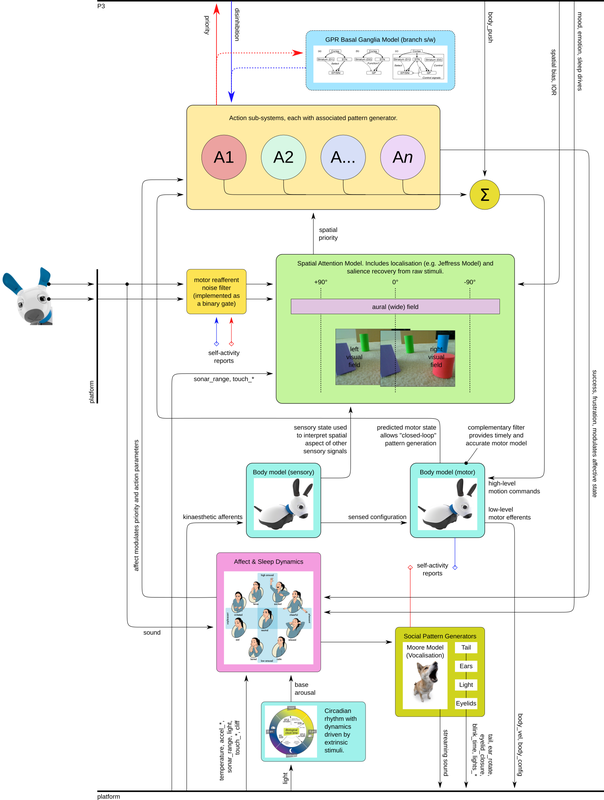

System diagram

Elements

Spatial Attention

The simple spatial attention model computes spatial salience across the region covered by both vision sensors (eyes) and the wider field of view covered by the ears. Salience is driven by movement and intensity, and all sensory information is fused into a single salience map, from which the maximum can be selected to indicate the current "most salient region" of space.
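As a rough illustration of the fusion-then-maximum idea described above, the following sketch sums two salience maps and picks the peak. It is not MIRO's implementation: the function names, the `audio_weight` parameter, and the assumption that the visual and auditory maps have already been resampled onto one common grid are all hypothetical.

```python
def fuse_salience(visual, audio, audio_weight=1.0):
    """Fuse two equally sized 2-D salience maps by a weighted cell-wise sum.

    Assumption: both maps already share one grid covering the same region.
    """
    return [
        [v + audio_weight * a for v, a in zip(visual_row, audio_row)]
        for visual_row, audio_row in zip(visual, audio)
    ]


def most_salient(salience):
    """Return (row, col, value) for the cell with the highest salience."""
    best = (0, 0, salience[0][0])
    for r, row in enumerate(salience):
        for c, value in enumerate(row):
            if value > best[2]:
                best = (r, c, value)
    return best


# Hypothetical example data: a moving object seen at (1, 1), a sound at (0, 2).
visual = [[0.0, 0.2, 0.1], [0.0, 0.5, 0.0]]
audio = [[0.0, 0.0, 0.9], [0.0, 0.0, 0.0]]
fused = fuse_salience(visual, audio)
row, col, _ = most_salient(fused)
```

In this toy data the sound dominates, so the selected cell is the one where the auditory salience was injected.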

Basal Ganglia

The Basal Ganglia model (BG) acts as a centre for action selection with hysteresis and persistence. The action sub-systems shown in the system diagram each compute a priority between zero and one. At each time step, the BG selects which action sub-system is disinhibited and thereby able to take control of the plant (primarily MIRO's kinematic chain). The BG model is described in Gurney et al. (2001).
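The selection behaviour can be pictured with a much simpler stand-in than the Gurney et al. model: a winner-take-all rule in which the currently selected action receives a small bonus, so that a challenger must win by a margin before control switches. The function name and the `hysteresis` value below are assumptions made for illustration only.

```python
def select_action(priorities, current=None, hysteresis=0.1):
    """Pick the action sub-system to disinhibit at this time step.

    priorities: dict mapping action name -> priority in [0, 1].
    current:    name of the action selected at the previous step, if any.
    hysteresis: bonus added to the current action's priority (hypothetical).
    """
    best_name, best_score = None, float("-inf")
    for name, priority in priorities.items():
        score = priority + (hysteresis if name == current else 0.0)
        if score > best_score:
            best_name, best_score = name, score
    return best_name


# A slightly stronger challenger does not displace the current action...
kept = select_action({"orient": 0.5, "approach": 0.55}, current="orient")
# ...but a clearly stronger one does.
switched = select_action({"orient": 0.5, "approach": 0.7}, current="orient")
```

The bonus is what prevents rapid flip-flopping between two actions whose priorities are nearly equal.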

Action sub-systems

Action sub-systems can be implemented in user code in P3; the following are built in to P2. These actions are central to MIRO's demonstrator mode: they comprise simple behaviours driven by simple triggers, and are neither a sophisticated nor a complete suite of behaviour. Nonetheless, these few simple actions are sufficient to generate engaging overall behaviour.

Mull

Named for historical reasons, "mull" is the idle action, and has a fixed low priority, taking control of the plant if nothing else is pressing.

Halt

"Halt" is an action that interrupts any other action if conditions arise that raise its priority (such as one or other of the motors of the kinematic chain being stalled as MIRO tries to drive its head into something it shouldn't). This action is not biomimetic, but serves to protect the robot to a degree from its own errors. MIRO will close its eyes for a couple of seconds to indicate it is performing this action.

Orient

Orienting is close to being MIRO's default behaviour—unless conditions are right for one of the more active actions (approach or flee), MIRO's behaviour consists of a series of orients to salient regions in space. The action involves turning the head, which sometimes includes turning the whole body, so that the centre of MIRO's gaze is pointing at the targeted location.

Approach

Under the right conditions, MIRO will approach the salient region in space, rather than just turning towards it. MIRO does not attempt to judge distance to any great accuracy, but will make a very rough estimate and then move part of the way towards the salient location. Approach is strongly favoured when MIRO is in a good mood, and disfavoured when it is in a poor mood.

Flee

Flee is the counterpart to approach: in a poor mood, MIRO will flee from salient regions that it would approach were it in better humour. MIRO will turn away from the targeted location and "run" some distance in more-or-less the opposite direction.
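One way to picture how mood could bias approach against flee is sketched below. The source does not say how priorities are actually computed, so everything here is an assumption: mood is taken to be a single valence value in [-1, 1], salience a value in [0, 1], and the linear weighting is purely illustrative.

```python
def clamp01(x):
    """Limit a value to the range [0, 1]."""
    return max(0.0, min(1.0, x))


def approach_flee_priorities(salience, mood):
    """Return (approach, flee) priorities for one salient region.

    salience: strength of the target in [0, 1] (assumed scale).
    mood:     valence in [-1, 1]; positive is a good mood (assumed scale).
    """
    approach = clamp01(salience * (0.5 + 0.5 * mood))
    flee = clamp01(salience * (0.5 - 0.5 * mood))
    return approach, flee


good_approach, good_flee = approach_flee_priorities(0.8, mood=0.5)
poor_approach, poor_flee = approach_flee_priorities(0.8, mood=-0.5)
```

With the same salient target, a good mood ranks approach above flee and a poor mood reverses the ordering.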

Retreat

Retreat, like halt, is more a robotic than a biological action: if either of the actions that involve body translation (approach and flee) is halted by a wheel stall, the priority for retreat is raised temporarily, and the action causes MIRO to reverse its recent wheel motion, moving it away from whatever obstacle may have caused the stall.
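"Reversing recent wheel motion" can be sketched as replaying a short history of wheel commands backwards with the signs flipped. The `WheelHistory` class, its buffer length, and the (left, right) speed representation are hypothetical and are not taken from MIRO's code.

```python
from collections import deque


class WheelHistory:
    """Keep the most recent wheel speed commands (hypothetical helper)."""

    def __init__(self, maxlen=50):
        # Older commands fall off the front once maxlen is reached.
        self._commands = deque(maxlen=maxlen)

    def record(self, left, right):
        """Store one (left, right) wheel speed command."""
        self._commands.append((left, right))

    def retreat_plan(self):
        """Return the stored commands newest-first with both speeds negated."""
        return [(-left, -right) for left, right in reversed(self._commands)]


history = WheelHistory(maxlen=3)
for command in [(0.1, 0.1), (0.2, 0.1), (0.3, 0.3), (0.4, 0.2)]:
    history.record(*command)
plan = history.retreat_plan()
```

Bounding the history means the robot only backs out of its most recent movement rather than retracing its whole path.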

Motor Pattern Generator

A key part of MIRO's connection with its biological roots is the way that actions are expressed through a motor pattern generator (MPG). High-level motor commands output by the action sub-systems comprise, in most cases, velocity vectors for a point a little in front of MIRO's nose. MIRO's MPG computes the changes required in the kinematic chain, as well as in the location of MIRO's footprint, to support that drive signal. Those changes are then sent to P1 for implementation by lower-level controllers. The details of the MPG are not yet published.
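Since the MPG itself is unpublished, the following is only a generic kinematic sketch of how a velocity for a point ahead of the body can be turned into wheel speeds. It assumes a differential-drive base, ignores the neck joints of the kinematic chain entirely, and uses hypothetical parameter names and values (`point_offset`, `track_width`).

```python
def wheel_speeds(vx, vy, point_offset=0.1, track_width=0.16):
    """Map the velocity of a point ahead of the body to wheel speeds.

    vx, vy:       desired velocity of the point in the body frame (m/s),
                  x forward and y to the left.
    point_offset: distance of the point ahead of the wheel axle (m, assumed).
    track_width:  distance between the two wheels (m, assumed).
    Returns (left, right) wheel speeds in m/s.
    """
    forward = vx                     # body translation follows the x component
    yaw_rate = vy / point_offset     # sideways motion of the point needs a turn
    left = forward - yaw_rate * track_width / 2.0
    right = forward + yaw_rate * track_width / 2.0
    return left, right


straight = wheel_speeds(0.2, 0.0)
turn_left = wheel_speeds(0.0, 0.1, point_offset=0.1, track_width=0.2)
```

Driving a point ahead of the axle rather than the axle itself lets a sideways demand be met by turning, which is one reason such a point is a convenient control target.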

Details

A description of the details of the system model pictured above is in preparation. In the meantime, many of the key elements of the system have been discussed in some form in the scientific literature—publications covering these elements are listed below.

A computational model of action selection in the basal ganglia. I. A new functional anatomy, K. Gurney, T. J. Prescott, P. Redgrave, 2001.

Whisker Movements Reveal Spatial Attention: A Unified Computational Model of Active Sensing Control in the Rat, B. Mitchinson, T. J. Prescott, 2013.

A model of saliency-based visual attention for rapid scene analysis, L. Itti, C. Koch, E. Niebur, 1998.

A Real-Time Parametric General-Purpose Mammalian Vocal Synthesiser, R. K. Moore, 2016.

The circumplex model of affect: An integrative approach to affective neuroscience, cognitive development, and psychopathology, J. Posner, J. A. Russell, B. S. Peterson, 2005.

SCRATCHbot: Active Tactile Sensing in a Whiskered Mobile Robot, Martin J. Pearson, Ben Mitchinson, Jason Welsby, Tony Pipe, Tony J. Prescott, 2010.

Adaptive Cancelation of Self-Generated Sensory Signals in a Whisking Robot, Sean R. Anderson, Martin J. Pearson, Anthony Pipe, Tony Prescott, Paul Dean, John Porrill, 2010.